Scrolling through some of the internet’s most awkward family photos is funny, but you might not be laughing if your latest family photo shoot ends up on a popular blog.

Family photos can be marred by all sorts of mistakes, but the worst ones end up as fodder for mockery on the popular and hilarious blog Awkward Family Photos. By following a few simple dos and don’ts, you can create beautiful family photos that you’ll want to treasure forever and preserve in a timeless family album.

By not following them, you’ll create photos that others will want to treasure on lists like this one.

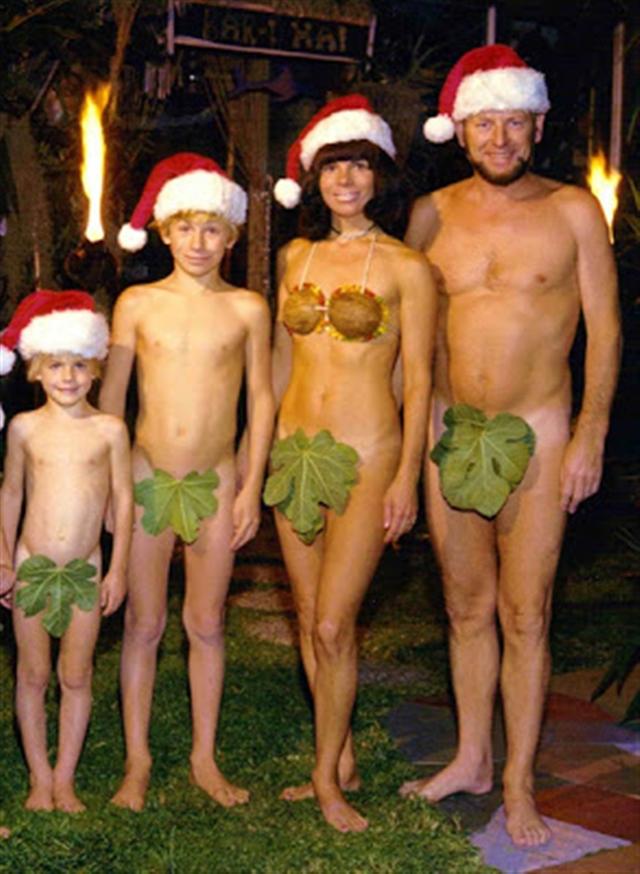

“Christmas (Adam and) Eve”

Posing in Santa hats is a classic move for family Christmas cards, but this twist on a classic is a little too…twisted. Being creative with the holiday theme is a great idea, and it’s important to think outside the box, but you might want to try keeping your clothes on, or at least using a little more than leaves and coconuts to cover yourselves.

“The Family That Strips Together, Stays Together”

Unless you were raised in a nudist colony, your typical day probably involves getting out of bed and greeting your parents at the breakfast table — wearing clothes. Although our families often see us in our most vulnerable states, taking a family photo baring it all, all together, may not be the kind of familial intimacy that you want to showcase to the world.

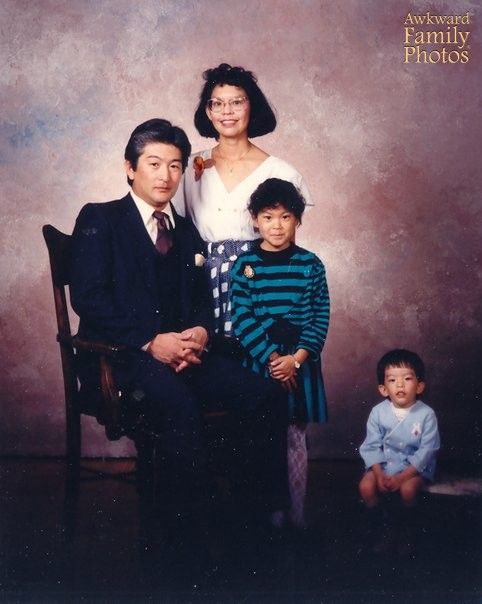

“Child Left Behind”

Make sure the whole family is assembled close together before the photographer clicks. Otherwise, you can end up with some serious teen angst problems later on when one child discovers that their feeling of “distance” from the family might not be all in their head.

“All the Colors of the Rainbow”

But where is indigo? This family could have waited for another child to come along if they wanted to fully execute this theme, but in my opinion, they should have scrapped the idea altogether. Unless it’s Halloween, no family photo should involve face paint. And while closeness is always a good idea, literally stacking your family on top of one another might be going too far.

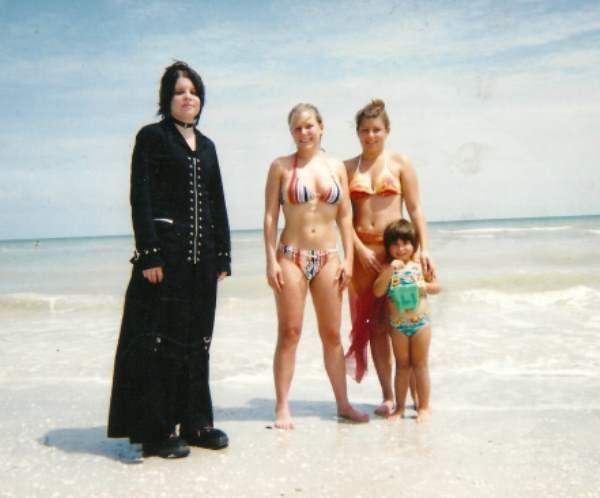

“Baa, Baa, Black Sheep”

Not all siblings like the same things or dress the same way, and family photos offer the perfect opportunity to capture each child’s individuality. But make sure that everyone looks as though they’re enjoying the family vacation, even if it’s back to fights and bickering the moment after the camera clicks.

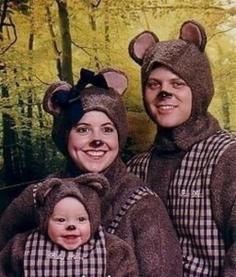

“The Three Bears”

Dressing in family costumes, especially as cuddly creatures, is another idea that should be reserved exclusively for Halloween. You also have to question how this family came across these costumes in the first place and how much effort went into planning the photo. We’re pretty sure Goldilocks wouldn’t give this her stamp of approval; it’s pretty far from being “just right.”

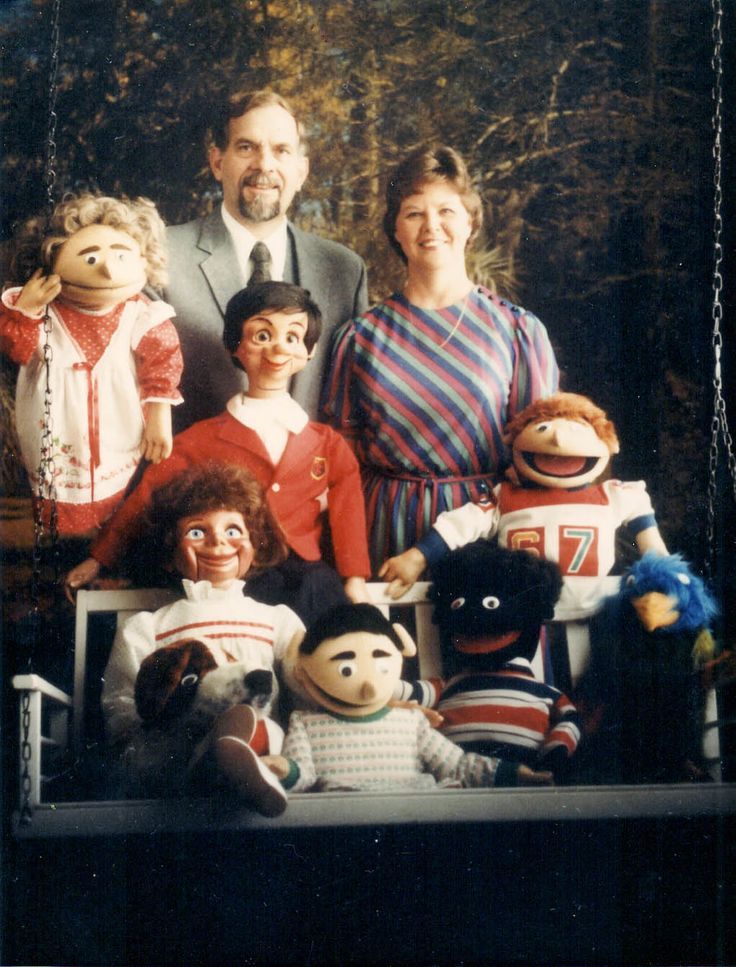

“Parenting with Strings Attached”

This couple chose to pose with their puppets and show what a big, happy family they are. But family photos should never look like publicity material for a new horror movie.

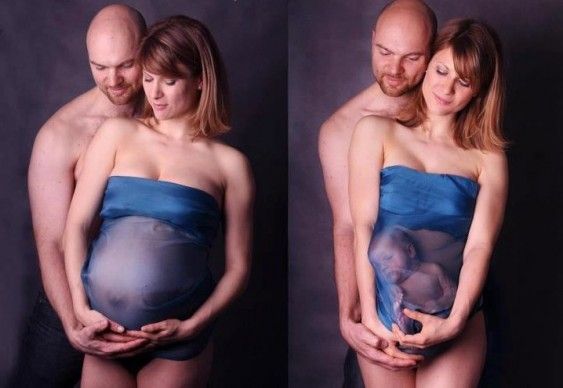

“Let It Go!”

Some parents want their children to stay their little boy or girl forever, but few try to keep them from leaving the womb. Also, why is the husband shirtless? Pregnancy and baby photo shoots should make us go “awww!”, not “eww!”